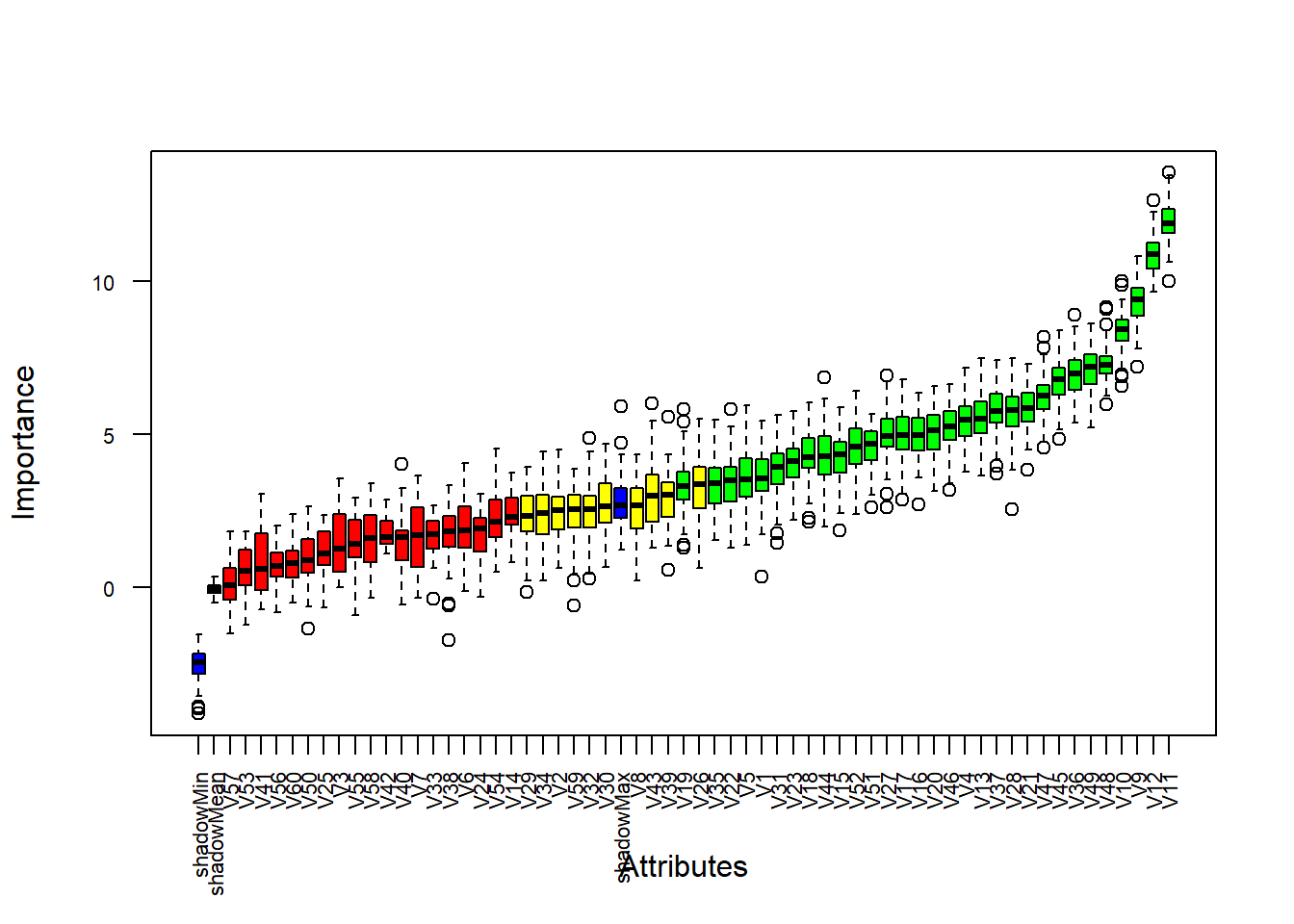

Each dataset was carefully selected from thousands of datasets on OpenML by the creators of the OpenML-CC18 benchmark. Most of the datasets have up to a few thousand rows and up to a hundred columns, so they are small and medium-sized datasets. The accompanying plot presents the distributions of the number of rows and columns in the datasets, together with a scatter plot of data sizes.

Each dataset in the benchmark suite has defined train and test splits for 10-fold cross-validation. For each train and test split I added an internal 5-fold cross-validation, so I have validation data that can be used to tune the number of trees.

Training the Random Forest Classifiers with Scikit-Learn

- each dataset has a defined train and test split (with 10-fold CV),
- for each train set, I additionally split it into train and validation with 5-fold CV,
- for each set of (train, validation, test) data I trained a Random Forest classifier from scikit-learn with n_estimators=1000 (other hyper-parameters are left at their defaults).
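The splitting scheme described above can be sketched roughly as follows. This is only an illustration under stated assumptions: it uses synthetic data from `make_classification` and generates the folds with `StratifiedKFold`, whereas the benchmark ships its own predefined 10-fold splits. The helper name `nested_splits` is hypothetical and not taken from the original code.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import StratifiedKFold


def nested_splits(X, y, outer_folds=10, inner_folds=5, seed=0):
    """Yield (train_idx, val_idx, test_idx) index arrays into X and y.

    Assumption: folds are generated here with StratifiedKFold; the benchmark
    itself provides predefined outer train/test splits.
    """
    outer = StratifiedKFold(n_splits=outer_folds, shuffle=True, random_state=seed)
    for train_full_idx, test_idx in outer.split(X, y):
        inner = StratifiedKFold(n_splits=inner_folds, shuffle=True, random_state=seed)
        for tr, va in inner.split(X[train_full_idx], y[train_full_idx]):
            # Inner indices are positions within train_full_idx; map them back.
            yield train_full_idx[tr], train_full_idx[va], test_idx


# Synthetic stand-in for a single benchmark dataset.
X, y = make_classification(n_samples=500, n_features=20, random_state=0)
splits = list(nested_splits(X, y))
print(len(splits))  # 10 outer folds x 5 inner folds

# Train one forest on the first (train, validation, test) triple.
train_idx, val_idx, test_idx = splits[0]
forest = RandomForestClassifier(n_estimators=1000, random_state=0)
forest.fit(X[train_idx], y[train_idx])
print("validation accuracy:", forest.score(X[val_idx], y[val_idx]))
```

With 10 outer and 5 inner folds, each dataset yields 50 (train, validation, test) triples, so 72 datasets give 72 × 50 = 3,600 trained classifiers.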

The datasets used in this study are from the OpenML-CC18 benchmark. I have trained 3,600 Random Forest classifiers (each with 1,000 trees) on 72 datasets (from the OpenML-CC18 benchmark) to check how many trees should be used in the Random Forest. The main findings:

- the best performance is obtained by selecting the optimal number of trees with single-tree precision (I tried tuning the number of trees with a 100-tree step and with a single-tree step),
- the optimal number of trees in the Random Forest depends on the number of rows in the dataset: the more rows in the data, the more trees are needed (the mean of the optimal number of trees is 464),
- when tuning the number of trees, train the Random Forest with the maximum number of trees and then check how it performs when using different subsets of trees (with single-tree precision) on the validation dataset (code example below).

All the code is available in a Jupyter Notebook. If you have any questions, you can email me or leave a comment.
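The tuning procedure described above (train once with the maximum number of trees, then score every prefix of trees on the validation data) can be sketched like this. It is a minimal sketch, not the notebook's code: the function name `tune_number_of_trees`, the use of log loss as the validation metric, the synthetic data, and the smaller forest of 200 trees (chosen to keep the example fast) are all assumptions.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import log_loss
from sklearn.model_selection import train_test_split


def tune_number_of_trees(forest, X_val, y_val):
    """Return (best_n_trees, losses); losses[k - 1] is the validation
    log loss of the ensemble made of the first k trees."""
    # Per-tree class probabilities, shape (n_trees, n_samples, n_classes).
    per_tree = np.stack([tree.predict_proba(X_val) for tree in forest.estimators_])
    # Running mean over trees: entry k - 1 averages the first k trees,
    # mirroring how the forest averages its trees' probabilities.
    counts = np.arange(1, len(forest.estimators_) + 1)[:, None, None]
    running_mean = np.cumsum(per_tree, axis=0) / counts
    losses = np.array(
        [log_loss(y_val, proba, labels=forest.classes_) for proba in running_mean]
    )
    return int(np.argmin(losses)) + 1, losses


# Synthetic data as a stand-in for one (train, validation) split.
X, y = make_classification(
    n_samples=1000, n_features=20, n_informative=8, random_state=0
)
X_train, X_val, y_train, y_val = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)

# The study used 1,000 trees; 200 keeps this sketch quick to run.
forest = RandomForestClassifier(n_estimators=200, random_state=0)
forest.fit(X_train, y_train)

best_n, losses = tune_number_of_trees(forest, X_val, y_val)
print(f"best number of trees: {best_n}, validation log loss: {losses[best_n - 1]:.4f}")
```

Because the forest is trained only once, evaluating every tree count from 1 up to the maximum costs one prediction pass per tree rather than one full training run per candidate value.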
